5 Levels of AI Testing Autonomy

The driverless vehicle levels were defined by SAE (Society of Automotive Engineers) some years ago as a way of defining the actual capabilities of driverless vehicles. Today, most driverless automation is level 2 or 3 (e.g., Tesla), but Waymo seems to be showing level 4 or 5 capabilities in its rollouts in Arizona. That means that, despite all the hype, we can measure where a car company is with its technology. It is no longer arguable but knowable.

The same is true in AI test generation for functional and performance testing.

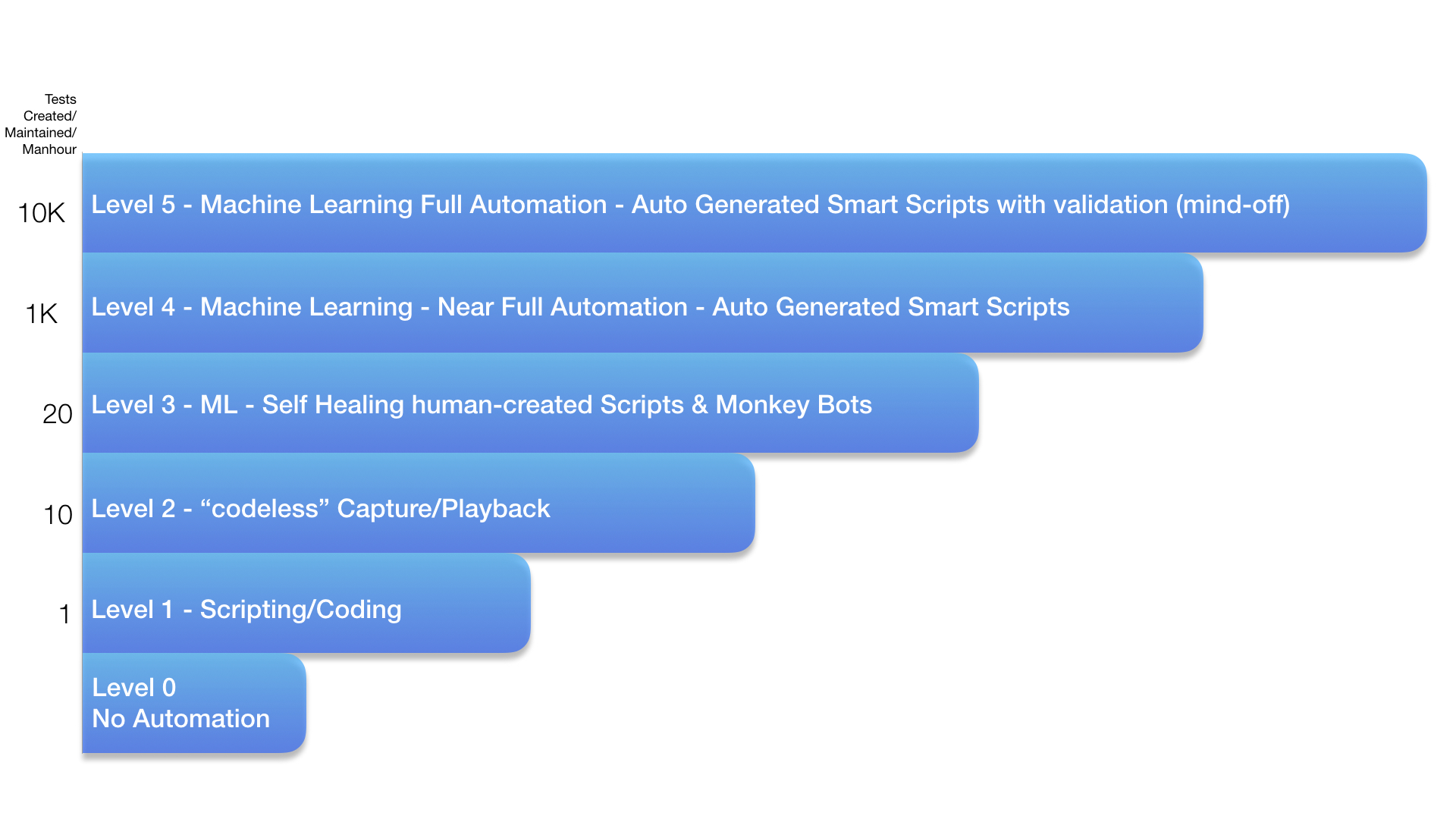

I have seen several of these scales proposed, and there is no exact industry standard, but this one seems to be a good proxy for the impact AI would have at each level. We use it at Appvance to define where we are today and the holy grail of functional test automation (i.e., Level 5).

Level 0 is manual testing, still 70% of the industry today.

Level 1 is scripting, probably 25%.

Level 2 is codeless testing or recording, probably the remaining 5%. These are rough numbers.

These have changed little in 30 years.

Level 3 introduces the first hints of machine learning through self-healing scripts or monkey bots. Appvance was the first to introduce self-healing scripts, in 2016. There are, of course, gradations in between, so a vendor that has progressed partway from level 2 to level 3 might be described as being at level 2.5.

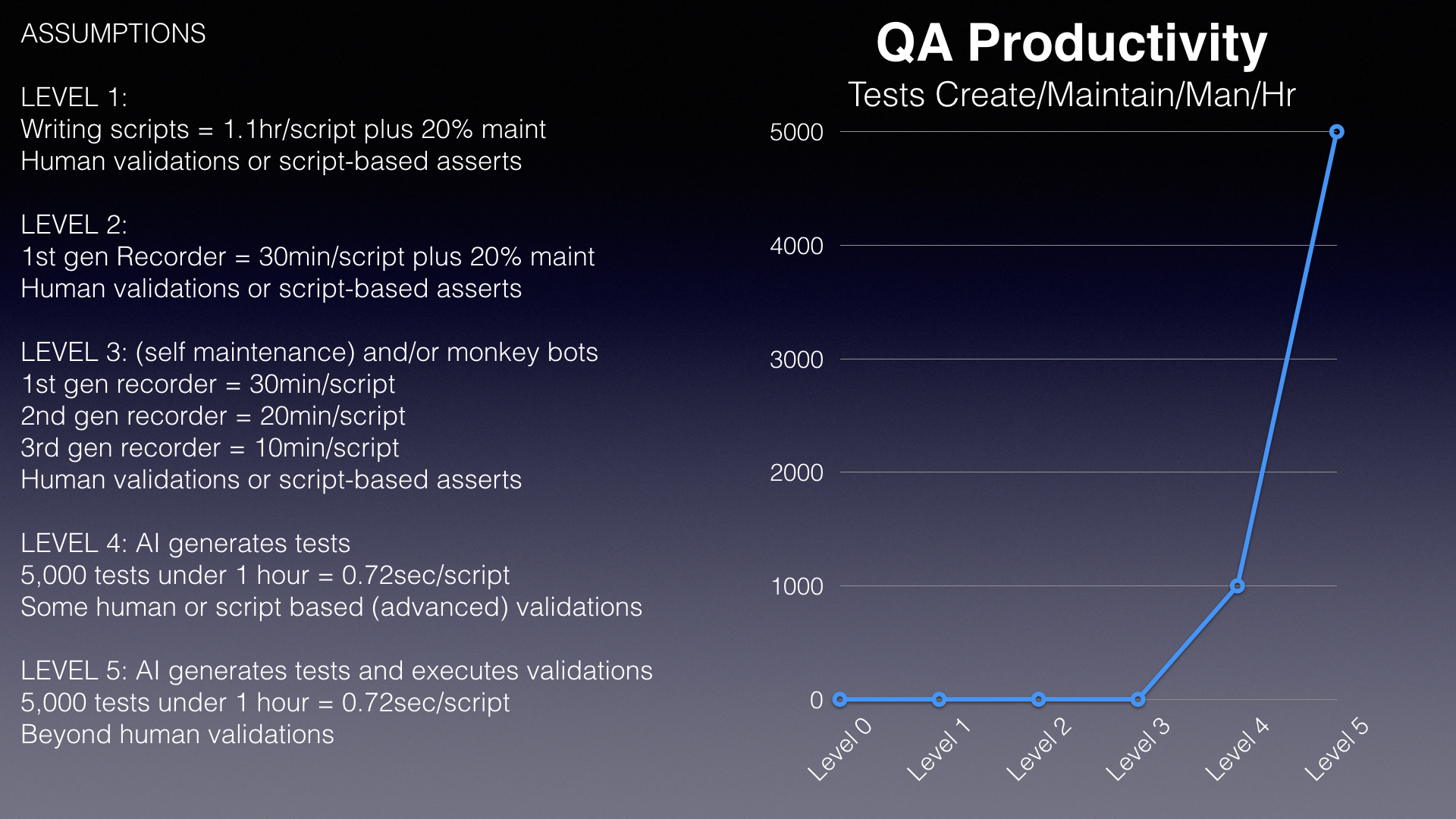

In any case, the real productivity gains happen when you finally reach level 4, which Appvance was the first to announce, in late 2017; it is still the only vendor offering level 4 autonomy. This is an improvement of more than two orders of magnitude in tests generated or maintained per man-hour.

And finally, level 5 autonomy is the holy grail. At this level, self-generating tests can validate actions, data, elements, or anything else at or above human capability, and do so autonomously.

We have modeled the team productivity gains around creating and maintaining tests, which account for 85% of team effort in enterprises today. That model has driven our work in AI and is a useful measure of the gains one might expect at each level of QA autonomy. Below is that slide from our client presentations, and we have enough data from the past year to say these are reasonable assumptions.

When considering AI-driven testing, the most important question is what level of autonomy a vendor is offering. Many vendors are at level 2, and perhaps one or two are hinting at level 3.

As I said in an earlier post, there are many uses of AI that can add value across the QA landscape. Applitools is an excellent vendor that uses AI to recognize objects and changes in web pages. Change monitoring is a critical but often ignored part of QA for ops. Others have modeled login techniques across thousands of applications to automatically create a login script. These can certainly offer value to organizations.

However, for functional, regression, and performance testing, you need to see real productivity gains, or the boss won’t buy it. It’s one thing to try another tool for free and say “hey, that’s kind of cool.” It’s another to change your continuous testing regimen to one driven by tests that are not created by humans but are generated and executed 1000X faster. That is what levels 4 and 5 are about: getting at least the same results you get today at a much faster pace and a lower cost.

Every enterprise today needs continuous testing as part of its CI/CD pipeline. A true AI-driven test generation system can offer a huge boost to productivity, speed, and outcomes.

That is what AI testing must deliver before you make it part of your software development lifecycle.

Kevin Surace is CEO of Appvance.ai, the leader in AI-driven software testing. He has been featured by Businessweek, Time, Fortune, Forbes, CNN, ABC, MSNBC, and FOX News, and has keynoted hundreds of events, from the Inc. 5000 to TED to the US Congress. He was Inc. Magazine’s Entrepreneur of the Year, a CNBC Top Innovator of the Decade, a World Economic Forum Tech Pioneer, Chair of Silicon Valley Forum, and a Planet Forward Innovator of the Year nominee; he was featured for 5 years on TechTV’s Silicon Spin and has been inducted into RIT’s Innovation Hall of Fame. Mr. Surace led pioneering work on the first cellular data smartphone (AirCommunicator) and the first human-like AI virtual assistant (General Magic), and has been awarded 82 patents worldwide.