Tag: Test Strategy

For decades, QA automation has relied on human-created scripts, recorders, and manual maintenance. Even with assistive AI sprinkled into legacy tools, the burden has stayed the same: people must still author, update, and debug test cases one by one. That model simply cannot keep up with modern software velocity. With Appvance IQ (AIQ), AI becomes

This is the fifth post in the #BestPractices blog series by Kevin Parker. Excellent application performance and reliability are crucial in today’s software-dependent business environment. That’s why load testing, simulating realistic user loads to assess application performance, is a cornerstone of quality assurance. However, load testing can be resource-intensive, both in terms of time

The Quantum Leap that AI Can Provide to Testing
The buzz around ChatGPT and GPT-4, the latest release of the large language model from OpenAI, has not abated since it burst onto the tech scene several months ago. Many dev and testing teams are experimenting with leveraging the model to automatically write test scripts.

With the growth and evolution of software, the need for effective testing has grown exponentially. Testing today’s applications requires an immense number of complex tasks, as well as a comprehensive understanding of the application’s architecture and functionality. A successful test team must have strong organizational skills to coordinate their efforts and time to ensure that

Generative AI is a rapidly growing field with the potential to revolutionize software testing. By using AI to generate test cases, testers can automate much of the manual testing process, freeing up time to focus on more complex tasks. One of the leading providers of generative AI for software QA is Appvance. Appvance’s platform uses

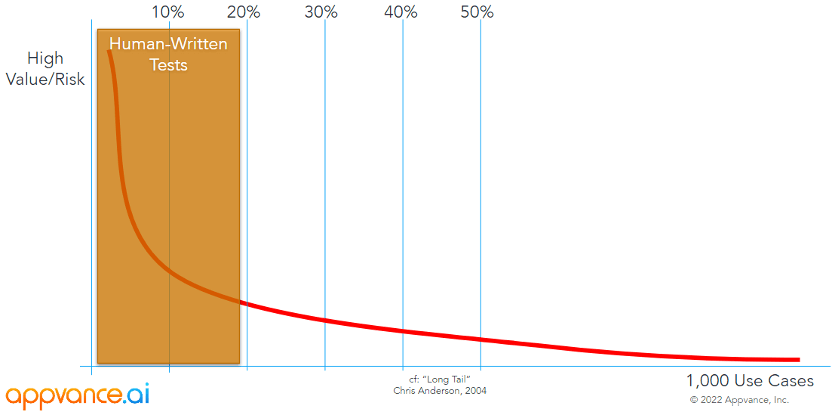

As business becomes increasingly digitized, it’s critical for teams to produce better quality, even as the complexity of the applications your business runs on increases. And now they must do so in an hour or less. In fact, you are going to have to be 800 times more productive (from a QA standpoint) if you want

Don Rumsfeld, former U.S. Secretary of Defense, famously said in 2002, “There are known knowns. These are things we know that we know. There are known unknowns. That is to say, there are things that we know we don’t know. But there are also unknown unknowns. There are things we don’t know we don’t

The cost of underperformance in delivering quality software is steep. In addition to interrupted in-app experiences, bugs often contribute to high rates of customer churn and can lead to a damaged brand image. Users expect highly functioning apps with no bugs or issues, and apps that don’t provide these qualities are quickly deemed irrelevant and

This post is the second in a 2-part series. As we discussed in my prior post, the Autonomous Software Testing Manifesto, AI provides you, the Automation Engineer, the power to test broadly and deeply across your application. This furnishes you with the opportunity to reevaluate your test strategy and determine what will be most effective

The advances in AI and ML now make it possible to create expert systems that know both how applications are designed and how they behave. These systems can absorb the domain-specific instructions that enable them to replicate the behaviors of experienced QA testers with years of application-specific knowledge. With the capacity to deploy artificial intelligence